Over the past few weeks, I've been toying with Lambda functions and thinking about using them for more than just APIs. I think people miss the most interesting aspect of serverless functions: their massively parallel capability, which can do a lot more than just run APIs or respond to events.

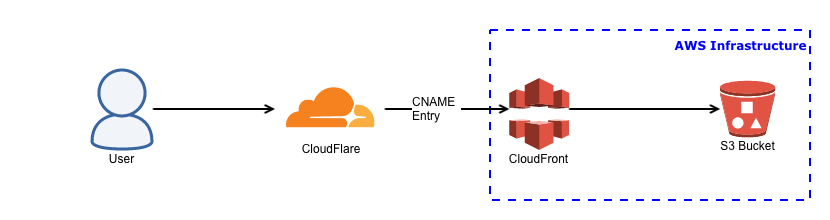

AWS lets you run Lambda functions in two ways: by triggering them from an event (e.g. a new file landing in an S3 bucket), or by invoking them directly from code. Invoking is the game-changer, because it lets you write code that offloads processing straight to a Lambda function. Seen that way, Lambda is a giant machine with huge potential.
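To make the "invoke from code" idea concrete, here is a minimal sketch of fanning work out to many Lambda invocations at once. It assumes boto3, configured AWS credentials and an already-deployed function. The function name `potassium40-scanner`, the `{"domains": [...]}` payload shape and the helper names are hypothetical, not potassium-40's actual interface. The `invoke` callable is passed in so the fan-out logic can be run locally without AWS.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def make_lambda_invoker(function_name: str, max_connections: int = 100) -> Callable[[dict], dict]:
    """Return a callable that synchronously invokes a deployed Lambda via boto3.

    Assumes boto3 is installed and AWS credentials are configured.
    """
    import boto3  # imported lazily so fan_out() can be used without boto3
    from botocore.config import Config

    client = boto3.client(
        "lambda",
        # Allow many concurrent HTTP connections and wait up to Lambda's 15-minute limit
        config=Config(max_pool_connections=max_connections, read_timeout=900),
    )

    def invoke(payload: dict) -> dict:
        response = client.invoke(
            FunctionName=function_name,
            InvocationType="RequestResponse",  # block until the function returns
            Payload=json.dumps(payload).encode("utf-8"),
        )
        # The function's JSON result comes back as a streaming body
        return json.loads(response["Payload"].read())

    return invoke


def fan_out(batches: List[List[str]], invoke: Callable[[dict], dict],
            max_workers: int = 100) -> List[dict]:
    """Send each batch of domains to its own invocation, many in flight at once.

    Results are returned in the same order as the batches.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda batch: invoke({"domains": batch}), batches))


if __name__ == "__main__":
    # A local stand-in for a real Lambda, so the fan-out logic runs without AWS
    def fake_invoke(payload: dict) -> dict:
        return {"scanned": len(payload["domains"])}

    print(fan_out([["a.com", "b.com"], ["c.com"]], fake_invoke))
    # [{'scanned': 2}, {'scanned': 1}]
```

Against real AWS this would look like `fan_out(batches, make_lambda_invoker("potassium40-scanner"))`. Setting `InvocationType="Event"` instead makes each call return immediately, but the results then have to be collected some other way, for example from S3.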

What could you do with a 1,000-core, 3 TB machine, connected to practically unlimited bandwidth and a large number of IP addresses?

Here's my answer. It's called potassium-40 (I'll explain the name later).

So what is potassium-40?

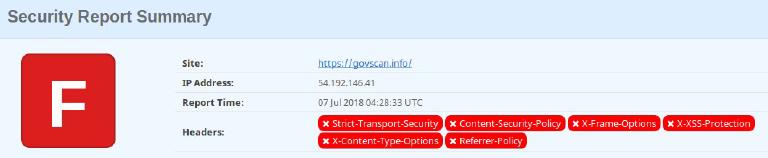

Potassium-40 is an application-level scanner built for speed. It uses parallel Lambda functions to run HTTP scans across a large list of domains.

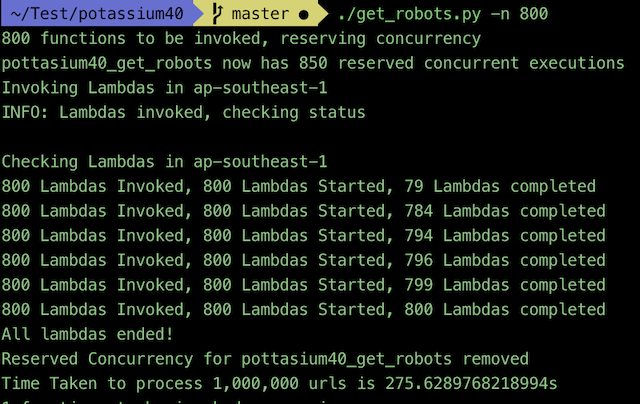

Currently it does just one thing: it grabs the robots.txt file from every domain in the Cisco Umbrella 1 million list and stores the results in a text file for download. (I only keep legitimate robots.txt files, and don't store 404 HTML pages, etc.)
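The post doesn't spell out how genuine robots.txt files are separated from 404 pages, so the check below is an assumed heuristic, not potassium-40's actual rule: keep only 200 responses, drop bodies that look like HTML (many sites answer unknown paths with a "soft 404" page and a 200 status), and require at least one standard directive. The function name is hypothetical.

```python
import re

# Directives that appear in real robots.txt files (illustrative, not exhaustive)
_DIRECTIVE = re.compile(
    r"^\s*(user-agent|disallow|allow|sitemap|crawl-delay)\s*:",
    re.IGNORECASE | re.MULTILINE,
)


def looks_like_robots_txt(status: int, body: str) -> bool:
    """Heuristically decide whether a response body is a genuine robots.txt file."""
    if status != 200:
        return False  # 404s, 5xx errors and similar are discarded
    head = body.lstrip()[:512].lower()
    if head.startswith("<") or "<html" in head:
        return False  # an HTML page (e.g. a "soft 404") served with status 200
    return _DIRECTIVE.search(body) is not None  # needs at least one directive
```

A heuristic like this trades a few false negatives (say, a robots.txt containing only comments) for keeping error pages out of the stored results.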

This isn't a port scanner like nmap or masscan. It doesn't just check the status of a port; it actually opens a TCP connection to the domain and performs all the handshakes required to fetch the robots.txt file.
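To show what "all the required handshakes" means in practice, here is a minimal standard-library sketch of a single fetch: `urlopen` resolves the name, opens the TCP connection, negotiates TLS for `https`, sends the GET and follows redirects. The `fetch_robots` name, the user-agent string and the 512 KB read cap are illustrative choices, not taken from potassium-40.

```python
import http.client
import urllib.error
import urllib.request
from typing import Optional, Tuple

MAX_BYTES = 512 * 1024  # illustrative cap on how much of the body to read


def fetch_robots(host: str, scheme: str = "https",
                 timeout: float = 5.0) -> Optional[Tuple[int, str, str]]:
    """Fetch /robots.txt from host with a full HTTP(S) request.

    Returns (status, content_type, body), or None if no HTTP exchange completed.
    urlopen follows redirects, so the status belongs to the final response.
    """
    url = f"{scheme}://{host}/robots.txt"
    request = urllib.request.Request(url, headers={"User-Agent": "robots-sketch/0.1"})
    try:
        # DNS lookup, TCP handshake, TLS handshake (https only), GET, redirects
        with urllib.request.urlopen(request, timeout=timeout) as response:
            body = response.read(MAX_BYTES).decode("utf-8", errors="replace")
            return response.status, response.headers.get("Content-Type", ""), body
    except urllib.error.HTTPError as err:
        # A 4xx/5xx answer: the exchange completed but there is no robots.txt to keep
        status, ctype = err.code, err.headers.get("Content-Type", "")
        err.close()
        return status, ctype, ""
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # DNS failure, refused connection, TLS error, timeout, malformed URL...
        return None
```

Every one of these steps keeps per-connection state (sockets, TLS session, buffers) alive until the response arrives, which is exactly what a SYN scan avoids.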

Checking whether a port is open requires sending just one SYN packet from your machine, and even a typical banner grab takes only 3-5 round trips. An HTTP request is far more expensive in resources and requires state to be kept for each connection, and it gets more expensive still when TLS and redirects are involved!
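The cost difference can be made concrete by counting round trips. The sketch below uses a deliberately simplified model (an assumption, not a measurement): 1 RTT for the TCP handshake, 2 for a full TLS 1.2 handshake or 1 for TLS 1.3, 1 for the HTTP request and response, DNS lookups ignored, and a fresh connection for every hop in a redirect chain. The function names are hypothetical.

```python
from typing import List


def hop_round_trips(scheme: str, tls13: bool = False) -> int:
    """Round trips to fetch one URL over a fresh connection (simplified model)."""
    rtts = 1  # TCP handshake: SYN, SYN-ACK (the final ACK can carry the request)
    if scheme == "https":
        rtts += 1 if tls13 else 2  # full TLS handshake, no session resumption
    return rtts + 1  # HTTP GET and its response


def chain_round_trips(redirect_chain: List[str], tls13: bool = False) -> int:
    """Round trips to follow a redirect chain, assuming a new connection per hop."""
    return sum(hop_round_trips(url.split("://", 1)[0], tls13) for url in redirect_chain)
```

Under this model a plain `http://` fetch costs 2 round trips, an `https://` fetch with TLS 1.2 costs 4, and a common `http://example.com/robots.txt` → `https://www.example.com/robots.txt` redirect costs 6, compared with a single outbound SYN for a port scan.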

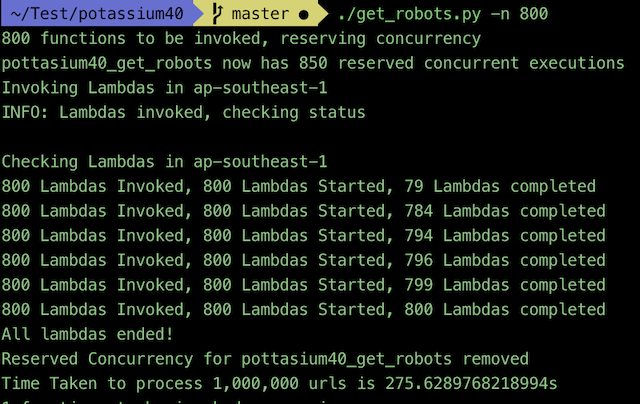

Which is where Lambda comes in. Lambda functions are effectively parallel computers that execute code for you, and AWS gives you a large amount of free resources every month! So not only can you run 1,000 parallel processes, you can do so for free!
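Whether a given scan actually stays free depends on memory size and run time. A quick way to check, using the Lambda free-tier allowance of 1 million requests and 400,000 GB-seconds per month (check current AWS pricing before relying on these figures) and ignoring per-millisecond rounding; the helper names and scan parameters are hypothetical:

```python
FREE_REQUESTS = 1_000_000    # free Lambda requests per month
FREE_GB_SECONDS = 400_000    # free Lambda compute per month


def gb_seconds(invocations: int, memory_mb: int, avg_duration_s: float) -> float:
    """Compute billed as memory (in GB, 1 GB = 1024 MB) times duration, summed."""
    return invocations * (memory_mb / 1024) * avg_duration_s


def fits_free_tier(invocations: int, memory_mb: int, avg_duration_s: float,
                   scans_per_month: int = 1) -> bool:
    """True if running the same scan scans_per_month times stays within the free tier."""
    total_requests = invocations * scans_per_month
    total_gbs = gb_seconds(invocations, memory_mb, avg_duration_s) * scans_per_month
    return total_requests <= FREE_REQUESTS and total_gbs <= FREE_GB_SECONDS
```

For example, 1,000 invocations at 512 MB averaging 120 seconds use 60,000 GB-seconds and fit comfortably, whereas 1,000 invocations at 3,008 MB running a full 300 seconds would use 881,250 GB-seconds, more than double the monthly allowance. These numbers are illustrative; the post doesn't state potassium-40's memory setting or per-function run time.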

A scan of 1,000,000 websites typically takes less than 5 minutes.
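The 5-minute figure says a lot about how the work has to be split. The sketch below assumes 1,000 concurrent functions (the figure used earlier in the post), one contiguous batch of domains per function, and the Umbrella list's `rank,domain` line format (e.g. `1,google.com`); the helper names are hypothetical. It then applies Little's law (requests in flight = throughput × latency) to estimate how many concurrent requests each function would need.

```python
import math
from typing import Iterable, List


def parse_umbrella(lines: Iterable[str]) -> List[str]:
    """Extract domains from 'rank,domain' lines such as '1,google.com'."""
    domains = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        _rank, _, domain = line.partition(",")
        if domain:
            domains.append(domain)
    return domains


def make_batches(domains: List[str], n_batches: int) -> List[List[str]]:
    """Split domains into n_batches contiguous batches whose sizes differ by at most 1."""
    size, extra = divmod(len(domains), n_batches)
    batches, start = [], 0
    for i in range(n_batches):
        end = start + size + (1 if i < extra else 0)
        batches.append(domains[start:end])
        start = end
    return batches


def required_rate(total: int, workers: int, budget_s: float) -> float:
    """Domains per second each worker must sustain to finish within budget_s."""
    return total / workers / budget_s


def in_flight_needed(rate_per_s: float, latency_s: float) -> int:
    """Little's law: concurrent requests needed to sustain rate_per_s at latency_s."""
    return math.ceil(rate_per_s * latency_s)
```

With 1,000 functions, each gets 1,000 domains and must get through about 3.3 per second to finish inside 300 seconds. At a hypothetical 1 second per fetch that means at least 4 requests in flight per function, and more once slow or unresponsive hosts and their timeouts are counted.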

But how do we scan 1 million URLs in under 5 minutes? Well, here's how.