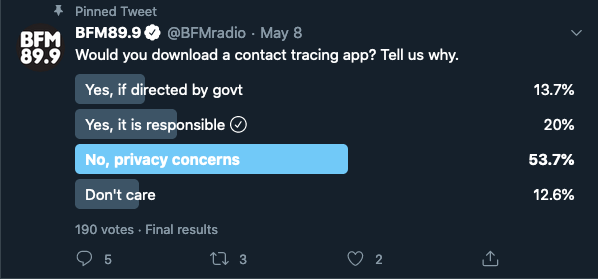

I thought I'd write down my thoughts on contact tracing apps, especially since BFM recently suggested that 53% of Malaysians wouldn't download a contact tracing app due to privacy concerns. It's important for us to address this, as I firmly believe that contact tracing is an important weapon in our arsenal against COVID-19, and having more than half of Malaysians dismiss it outright is concerning.

But first, let's understand what privacy is.

Privacy is Contextual

Privacy isn't secrecy. Secrecy is not telling anyone, but privacy is about having control over who you tell and in what context.

For example, if you met someone for the first time at a friend's birthday party, it would be rude and unacceptable to ask questions like:

- What's your weight?

- What's your last drawn salary?

- What's your age?

In that context, you're unlikely to find someone who will answer these questions truthfully.

But...

Age and weight are perfectly acceptable questions for a doctor to ask you at a medical appointment, and your last drawn salary is something any company looking to hire you will ask. We've come to accept these questions as OK -- in these contexts.

You might still not want to answer them, which might mean you don't get the job or the best healthcare -- but you can hardly call the questions themselves inappropriate. Far more people will answer these same questions truthfully if you change the context from a random stranger at a party to a doctor's appointment.

So privacy is contextual. To justify a privacy concern, we have to evaluate both the context and the question before coming to a conclusion.

So let's look at both, starting with the context: